Voice Search Overview

Voice search is here to stay and will only gain momentum. For marketers and SEOs, it is important to stay up to date with these features and optimize accordingly.

The processes behind voice and text search are quite different. Voice search queries may be longer and more complex, as people tend to ask questions in a conversational style, while text queries are typically shorter and more direct.

Another difference is in the way search results are presented. In text search, results are typically displayed on a search engine results page (SERP), with a list of links and brief descriptions. In contrast, voice search typically provides only the most relevant result, read aloud by a virtual assistant or smart speaker such as Apple Siri, Amazon Alexa, Google Assistant, or Microsoft Cortana. This means that optimizing for voice search requires a different approach from traditional SEO, with an emphasis on providing clear, concise answers to common voice questions.

Searching by sound is an SEO component that cannot be overlooked, and with the accelerating advancements in artificial intelligence, it is imperative that web developers and SEOs keep a watchful eye on this evolving technology.

The Statistics

As of the writing of this article, 32% of people between the ages of 18 and 64 use a voice search medium (Alexa, Siri, Cortana, etc.), and that number will only grow as we move into the future.

Entering standard text search queries on mobile devices is commonplace, with over 60% of cell phone users text searching and 57% of mobile users taking advantage of voice search.

It should be no surprise that Google is the most successful interpreter of audio searches, with 95% accuracy.

In a study in 2021, 66.3 million households in the US were forecasted to own a smart speaker and that forecast has become a reality as of 2023.

Voice technology stretches beyond search queries as 44% of homeowners use voice assistants to turn on TVs and lights, as well as an array of other smart home devices currently on the market.

With statistics such as these, speaking to robotic assistants is here to stay and will only grow with new technologies as we proceed through the 2020s and beyond.

How Does Voice Search Work?

The Physics Behind It

If you just need to know that there is an analog-to-digital conversion and are not interested in the specifics of how it’s done, you can skip this part and go to the next section, “Where Does the Data Come From?”.

The process of converting the sound of human speech into machine language comes down to two steps: filtering and digitizing.

Filtering: Smart speakers and voice assistants are designed to recognize the human voice over background noise and other sounds; hence, they filter out unwanted sounds so that they hear only our voices.

Digitizing: Sound occurs naturally as an analog signal, a continuous wave that can be described as a combination of sine waves. Computers cannot work with analog signals directly, so the signal must be converted into the computer language of binary code.

Below are the details of how an analog signal is converted to digital.

The Analog Conversion Process

In order to make this conversion, an Analog-to-Digital Converter (ADC) is required. The ADC works by sampling the analog signal at regular intervals and converting each sample into a digital value.

The steps are as follows:

- Sampling: The first step is to sample the analog signal at a fixed interval, that is, to take regular measurements of the amplitude (or voltage) of the signal at specific points in time. The rate at which the signal is sampled, known as the sampling rate or sampling frequency, determines the level of detail that can be captured in the digital signal. It must be high enough to capture all the frequencies of interest: the Nyquist-Shannon sampling theorem states that the sampling rate must be at least twice the highest frequency in the signal. The resulting digital signal is easier to store, transmit, and process using digital systems such as computers or microcontrollers.

- Quantization: Once the analog signal is sampled, the next step involves assigning a digital value to each sample based on its amplitude. The resolution of the quantization process is determined by the number of bits used to represent each sample. The higher the number of bits, the greater the resolution of the digital signal.

- Encoding: The final step is to encode the quantized samples into a digital format. This can be done using various encoding techniques such as pulse code modulation (PCM) or delta modulation.

Overall, converting an analog signal to a digital one involves sampling, quantization, and encoding. The resulting digital signal can then be processed using digital signal processing techniques.
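The three steps can be sketched in a few lines of Python. This is only an illustration, not how any particular smart speaker is built: the function names, the 440 Hz test tone, the 16 kHz sampling rate, and the 16-bit resolution are all assumptions chosen for the example, and a pure sine wave stands in for a real voice.

```python
import math
import struct

def sample(signal, duration_s, sample_rate_hz):
    """Sampling: measure the signal's amplitude at evenly spaced points in time."""
    count = round(duration_s * sample_rate_hz)
    return [signal(n / sample_rate_hz) for n in range(count)]

def quantize(samples, bits):
    """Quantization: map each amplitude in [-1.0, 1.0] to the nearest integer level."""
    max_level = 2 ** (bits - 1) - 1  # 32767 for 16 bits
    return [round(max(-1.0, min(1.0, s)) * max_level) for s in samples]

def encode_pcm16(levels):
    """Encoding: pack the levels as little-endian signed 16-bit PCM bytes."""
    return struct.pack("<" + "h" * len(levels), *levels)

# Assumed example values; a sine wave stands in for the analog signal.
tone_hz = 440.0
sample_rate_hz = 16_000

def tone(t):
    return math.sin(2 * math.pi * tone_hz * t)

# Nyquist-Shannon check: the rate must be at least twice the highest frequency.
assert sample_rate_hz >= 2 * tone_hz

samples = sample(tone, duration_s=0.01, sample_rate_hz=sample_rate_hz)
levels = quantize(samples, bits=16)
pcm_bytes = encode_pcm16(levels)

print(len(samples), "samples ->", len(pcm_bytes), "bytes of PCM data")
```

Raising the `bits` argument gives finer amplitude steps, and raising `sample_rate_hz` captures higher frequencies, which mirrors the trade-offs described in the list above. Note that `encode_pcm16` only handles 16-bit values; other bit depths would need a different packing format.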

In summary: Smart speakers and voice assistants take in the audio from a person’s speech and convert it to machine language.

Where Does the Data Come From?

Smart speakers and voice assistants pull the information they deliver from an aggregate of sources.

If you want your business to grow, you must be attentive to where content for voice search is collected so that you can make intelligent decisions regarding how to optimize for these devices.

Amazon Alexa

When Alexa responds to a query, it relies on Microsoft’s Bing search engine for the answer. Why? Because Amazon and Microsoft are both in direct competition with Google, even though Google has the most popular search engine in the world.

Amazon’s refusal to use Google for audio responses is not something to be concerned about. After all, Bing’s search algorithms are very similar to Google’s.

With that said, if a person speaks to Alexa with a specific request (e.g. “What’s the weather today?”), Alexa can pull that information from a database associated with that request. In this case, Alexa will connect to AccuWeather. The device can access Wikipedia and Yelp if it needs to as well.

Apple Siri

Initially, Apple used Bing as its default search engine, but in 2017, Apple partnered with Google. Now, when you say “Hey Siri”, you can expect Siri to access the immense data repository from Google and supply the answer. This applies to the Safari browser for text searches as well.

There is a caveat though. When it comes to local business searches, Siri will call on Apple Maps data and will use Yelp for review information.

Microsoft Cortana

This one is probably the most straightforward of all the voice assistants, as Cortana relies on what else but Microsoft Bing for its information.

Google Assistant

OK, this one’s a no-brainer. Google currently indexes trillions of pages to retrieve information, and since this also applies to Apple’s Siri, this section is the most important one if you want to optimize voice search for these two voice assistants.

In most cases, Google and Siri will read from Google’s featured snippet.

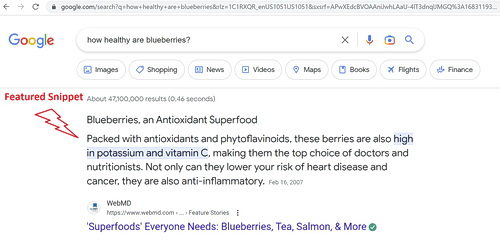

So What is a Featured Snippet?

A featured snippet is what you see at the top of the page after you run a Google search query. It is a paragraph that summarizes the answer to a question.

The information that Google places in the snippet is gathered from what Google determines to be the most reliable source (website) for that information.

How Does Google Determine a Featured Snippet?

For Google to post a snippet, it needs to know that the source is trustworthy. Domain authority, link juice, and high-quality content are three important organic factors, as any SEO would already know. In addition to these factors, Google will most often defer to “HowTo” and FAQ pages to pull in the snippet.

Is Structured Data Needed?

Structured data is extra code that helps Google better understand what the page or parts of the page are about.

One might wonder whether structured data has to be used in order to earn the featured snippet. The answer is no. As per Google, as long as the web page is optimized properly and contains the questions that match the user’s query (or voice search, in this case), structured data is not necessary. However, it wouldn’t hurt to put it in, as we are all aware that nothing is static in the SEO world and this rule can easily change in the future.

The reason why Google focuses on “HowTo” and FAQ pages is that their content reflects that of human speech. For example, an FAQ page on EVs may have the question “How long do EV batteries last?” That is exactly how a person would ask a voice assistant that same question!
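As an illustration of what such structured data can look like, here is a minimal Python sketch that builds FAQ markup using the schema.org FAQPage vocabulary and wraps it in a JSON-LD script tag. The helper name `faq_jsonld` is hypothetical, and the answer text is a placeholder rather than real content; treat it as a starting point under those assumptions, not a guaranteed route to a featured snippet.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

# The question comes from the example above; the answer is a placeholder.
faq = faq_jsonld([
    ("How long do EV batteries last?",
     "Placeholder answer: replace with the answer shown on your page."),
])

# JSON-LD is embedded in the page inside a script tag of this type.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(faq, indent=2)
    + "\n</script>"
)
print(script_tag)
```

The printed tag would be placed in the HTML of the FAQ page itself, and the questions and answers in the markup should match the text that visitors actually see on that page.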

On Google Assistant, the equivalent of an Alexa Skill is an ‘Action’, and Google will read the snippet back to the user to answer the question he or she asked.

Summarizing Optimization for Voice Search

Alexa

Bing: If you have not already done so, bring Bing into your scope of work for SEO and start optimizing for this search engine.

Yelp: We all know that reviews are of the utmost importance, so check out Yelp for your or your client’s business and build on those reviews! Legitimately of course.

Siri

Google SEO: If you are already optimizing for Google’s search, just keep up the good work.

Apple Factors: Where you might not be fully optimized is with Apple Maps, so get going. Start by registering with Apple Business Connect.

Yelp: And now Yelp is back in the picture!

Cortana

Bing: As mentioned, become a Bing SEO expert and you are ready to ask Cortana anything.

Besides the standard organic optimization, focus on schema markup for HowTo and FAQ articles for voice search, which, if you’re lucky, will be shown on the SERP as a featured snippet.

There you have it. How to optimize for voice search. Let’s get these robots configured so that our businesses will be the first thing you hear from your voice assistant!