Walk past any city construction site and you’re bound to see a network of steel beams and columns rising from the ground. Why are they using steel? Because steel is strong, durable, and easy to work with. It is the iron alloy of choice for building construction.

If you’re wondering how steel is manufactured, wonder no more! In this blog post, we’ll explain the process from start to finish.

History of Steel

The emergence of steel can be traced back to the Iron Age, when it was used to make swords. Historians say that the original creators of steel were the Hittites, a Middle Eastern civilization that existed during the Bronze Age and into the Iron Age, between 1400 and 1200 BC, in what is now Syria and Turkey. They learned that by heating iron with carbon, a stronger metallic substance could be made.

Historians are not exactly sure what happened to the Hittites, but the consensus is that their remaining territories were most likely absorbed into the Neo-Assyrian Empire (912 to 612 BC).

It has also been discovered that China first worked with steel around 403 to 221 BC, and that during the Han dynasty (202 BC to AD 220) wrought iron was melted together with cast iron, producing a steel composite.

Modern Day Uses

With the railroad construction boom of the 19th century and its ongoing demand for metal to make the tracks, a supply problem was emerging. Steelmaking was slow and tedious because there was no automated process to meet the need.

Enter the Steel Mill

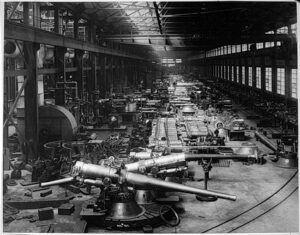

Steel mills have provided the raw materials for many of the world’s most important products. Since the first mill opened in the early 1800s, they have been constantly improved and adapted to meet the needs of the times.

These manufacturing plants have helped build skyscrapers, bridges, and countless other structures. They have also been instrumental in the development of new technologies, from solving railway construction issues to enabling assembly lines for other products.

There was no time more profitable for the steel mill than the era of rapid industrialization that ran from the nineteenth century to the mid-twentieth century.

And no company was more notable in meeting the country’s manufacturing demand than Bethlehem Steel, which provided the product for 125 years starting in 1887.

How Steel is Made

Steel does not appear out of thin air. It begins with the mining of iron ore, which then has to be combined with the element carbon via a blast furnace. Let’s take a closer look at how this process works.

Mining the Iron Mineral

It all begins with the mining of iron ore. An ore is a mineral deposit from which a valuable material can be extracted.

Once it is taken out of the mine or quarry, the ore is smelted, meaning it is heated to separate the metal from the impurities in the ore. This is done in a blast furnace.

Enter Carbon

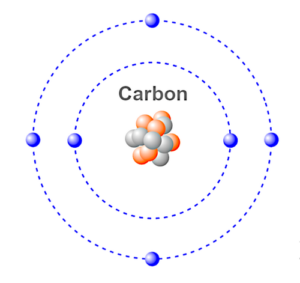

Carbon is an element in the Periodic Table that has an atomic number of six, with four electrons in its outer shell and two electrons in its inner shell.

Atoms that have fewer than eight electrons in their outer shell (called the valence shell) tend to bond with other atoms so that the outer shell reaches a stable count of eight electrons. This is known as the Octet Rule.
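To make the shell counting concrete, here is a minimal Python sketch. It assumes a simplified shell model in which shells hold 2, 8, and 8 electrons and fill in order, which is only reasonable for light elements (atomic numbers 1 to 18). The function names are hypothetical and chosen just for this illustration.

```python
# Simplified shell model (an assumption for illustration): shells fill in order
# with capacities 2, 8, 8. This only holds for atomic numbers 1 to 18.
SHELL_CAPACITIES = [2, 8, 8]


def shell_configuration(atomic_number):
    """Return the number of electrons in each occupied shell, innermost first."""
    if not 1 <= atomic_number <= 18:
        raise ValueError("simplified model only covers atomic numbers 1 to 18")
    shells = []
    remaining = atomic_number
    for capacity in SHELL_CAPACITIES:
        if remaining == 0:
            break
        filled = min(capacity, remaining)
        shells.append(filled)
        remaining -= filled
    return shells


def electrons_to_fill_outer_shell(atomic_number):
    """Return how many more electrons the outer shell could hold."""
    shells = shell_configuration(atomic_number)
    # The first shell is full at 2 electrons; later shells are full at 8.
    target = 2 if len(shells) == 1 else 8
    return target - shells[-1]


carbon = 6
print(shell_configuration(carbon))            # inner shell first, then outer shell
print(electrons_to_fill_outer_shell(carbon))  # electrons short of a full octet
```

For carbon, this gives two electrons in the inner shell and four in the outer shell, leaving it four electrons short of a full octet.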

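Iron has eight valence electrons, while carbon has four. Steel, however, is not a molecule formed by one iron atom bonding to one carbon atom. Instead, the much smaller carbon atoms dissolve into the iron and settle into the gaps between iron atoms in the metal’s crystal structure, and that mixture is what we call steel.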

It is essential to ensure that the correct amount of carbon is used with the iron, typically from a few hundredths of a percent up to about 2 percent by weight, so that the resulting product is steel.

If the wrong amount of carbon is mixed with iron, a different product will result, such as cast iron or wrought iron, neither of which is steel.
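To get a feel for how little carbon is involved, here is a small Python sketch that converts a carbon percentage by weight into kilograms of carbon per batch. The 1,000 kg batch size and the three sample percentages are illustrative assumptions, not figures from any mill, and the function name is made up for this example.

```python
def carbon_mass_kg(batch_mass_kg, carbon_percent):
    """Mass of carbon in a batch, given carbon content as a percentage by weight."""
    if batch_mass_kg < 0:
        raise ValueError("batch mass cannot be negative")
    if not 0 <= carbon_percent <= 100:
        raise ValueError("carbon percentage must be between 0 and 100")
    return batch_mass_kg * carbon_percent / 100.0


# Illustrative values only: a very low-carbon mix, a mix with a few tenths
# of a percent of carbon, and a high-carbon mix.
for percent in (0.04, 0.3, 3.0):
    mass = carbon_mass_kg(1000, percent)
    print(f"{percent}% carbon in a 1000 kg batch is about {mass:.1f} kg of carbon")
```

Even at the high end, carbon is a small fraction of the batch, which is why small changes in the amount added can produce a different material.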

When is Carbon Added to Iron?

For steel, the two elements are combined while the iron is molten, which changes the iron’s properties to those of steel.

Steel is therefore an alloy (a metal made by combining a metallic element with one or more other elements) of iron and carbon. The carbon atoms distort the crystalline lattice structure of iron, which enhances the metal’s strength; specifically, it increases the metal’s tensile and compressive strength.

The Manufacturing Process

A breakthrough in manufacturing steel on an industrial scale came in 1856, when Henry Bessemer found a way to make steel quickly. Bessemer’s steel production process helped drive the later stages of the Industrial Revolution.

It was the first cost-efficient industrial process for the large-scale production of steel from molten pig iron, and it worked by blowing air through the molten iron to remove impurities.

Adding Carbon Produces a Variety of Iron Alloys

As previously mentioned, when mixed with carbon, the iron’s characteristics will be changed, allowing a variety of different types of metal alloys to be created. It all depends upon the amount of carbon that is added to it. Let’s take a look.

Wrought Iron

Wrought iron is softer than cast iron and contains less than 0.1 percent carbon and 1 or 2 percent slag.

It was an advancement over bronze and began to replace bronze in Asia Minor in the 2nd millennium BC. Because iron was far more plentiful as a natural resource, wrought iron was used for a wide variety of implements as well as weapons and armor.

Cast Iron

Cast iron is an alloy of iron that contains 2 to 4 percent carbon, along with smaller amounts of other elements, such as silicon and manganese, and minor traces of sulfur and phosphorus. These are distinct from slag, the nonmetallic by-product of smelting. Cast iron can be easily poured into a mold to take a desired shape, a process known as casting, and has been used to make decorative fences and other aesthetic forms.

Cast iron facades were invented in America in the mid-1800s and were produced quickly, requiring much less time and fewer resources than stone or brick. They were also very efficient for decorative purposes, as the same molds were used for many buildings and a broken piece could be quickly recast. Because cast iron is strong under compression, slender columns were enough for support, which allowed for large windows that let in a lot of light and for high ceilings.

Steel

Steel is an alloy made from iron that usually contains several tenths of a percent of carbon, which increases its strength and durability over the other forms of iron, especially in tensile strength.

Strictly speaking, steel is just another kind of iron alloy, but it has a much lower carbon content than cast iron and about as much carbon as wrought iron (or sometimes slightly more), with other metals frequently added to give it additional properties.

Most of the steel produced today is called carbon steel, or plain carbon steel, although it can contain elements other than iron and carbon, like silicon and manganese.
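The carbon ranges described for wrought iron, cast iron, and steel can be sketched as a simple lookup in Python. Treat this as a rough illustration only: the cutoffs are assumptions based on the approximate figures in this post (under 0.1 percent for wrought iron, 2 to 4 percent for cast iron, and the band in between for steel). Real materials overlap at the edges and are also defined by slag content and how they are made. The function name is hypothetical.

```python
def classify_iron_alloy(carbon_percent):
    """Rough classification of an iron alloy by carbon content (percent by weight).

    The cutoffs are simplified assumptions for illustration, not an
    industry standard.
    """
    if carbon_percent < 0:
        raise ValueError("carbon percentage cannot be negative")
    if carbon_percent < 0.1:
        return "wrought iron"
    if carbon_percent < 2.0:
        return "steel"
    if carbon_percent <= 4.0:
        return "cast iron"
    return "outside the ranges described here"


# Sample values chosen for illustration.
for percent in (0.05, 0.3, 3.0):
    print(f"{percent}% carbon: {classify_iron_alloy(percent)}")
```

The point of the sketch is simply that the same two ingredients, iron and carbon, give three different materials depending on the proportion.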

Stainless Steel

The steel alloys mentioned above rely mainly on carbon, but stainless steel adds chromium as its key alloying element. The two behave very differently when it comes to corrosion: stainless steel is much more corrosion-resistant.

Galvanized Steel

Besides offering the general benefits of steel, galvanized steel has the added advantage of corrosion resistance, thanks to a zinc coating. The zinc protects the metal by providing a barrier against corrosive elements in the environment.

Summary

The advantages of steel are numerous: great tensile and compressive strength, fast manufacturing, and low cost. Compared to iron, it is the metal of choice in construction.

Although iron and steel appear to be similar, they are two distinct materials with specific characteristics and qualities. Iron is a chemical element, while steel is an alloy of iron that contains a small percentage of carbon. Depending on the amount of carbon mixed with the iron, different products emerge, including steel.

Steel is far stronger than iron, and at present no metal is a better choice when strength and cost are the major factors.